Introduction

By now I am pretty sure all of you have heard of ChatGPT, Midjourney or one of the other artificial intelligences you can now talk to or make use of. Where it got interesting for me was in early 2023, when Adobe released a Photoshop Beta version with AI features and staggering capabilities. I have been using it over the past months (for professional work and private stuff), so I want to show you some examples where it was useful to me and also how it works.

One thing is for sure: this will vastly disrupt image editing workflows.

Removing unwanted objects

For me this is the most useful option. You might have already used content aware fill in the past, and if you did, I guess you feel about it the way I do: sometimes okayish, but most of the time not really.

We will use this picture as an example. When I took it I wasn’t even sure if I should bother to press the shutter button, as there are many distracting elements in the background that are at first sight not easy to get rid of.

But let’s first see how things work with the content aware fill. I marked the pole and the car behind it and let it do its job. This is what it came up with:

The car is gone, but there are plenty of issues. Somehow that advertising poster was used as a sample area, and the buildings in the back also look odd. Looking more closely, you will also notice that the lines on the ground are wrong and don’t fit the perspective. Fixing this properly with content aware fill would take some time. I would probably need about 2 hours.

Now the new AI-based generative fill is something else entirely. It can analyze a scene with regard to light direction, shadows, size relations, depth of field, perspective and content. I again mark pole and car, just like before, and all I do now is write “remove car and pole” in that box:

And this is what the new generative fill comes up with:

You usually get three options to choose from, but if there is none that you are happy with you can let the AI generate more. In 99% of cases I was already very happy with one of these first three options though.

Compared to the content aware fill we see huge differences: perspective and lighting look flawless, the buildings have been completed in a way that makes sense and everything simply looks real.

I did clean up some further areas. The only one that didn’t work right away was the license plate, where I needed two steps. In total it took me about 5 minutes to get to this point. It would have been hours without this new technology.

Examples

This one I didn’t even expect to work out well. I told the AI to remove the pole as well as the lock and it was even able to generate something as complex as an out-of-focus bike wheel.

Removing this rainbow artefact was also easily possible by just marking it and entering “remove lens flare”. Another useful application I could think of would be removing sweat stains on a shirt, an otherwise tedious and time consuming task.

Extending the Canvas

I am not sure if any other AI offers this feature yet, but I was also amazed by it. You take an existing picture, extend the canvas size and let the Photoshop AI fill in the blanks around your picture.

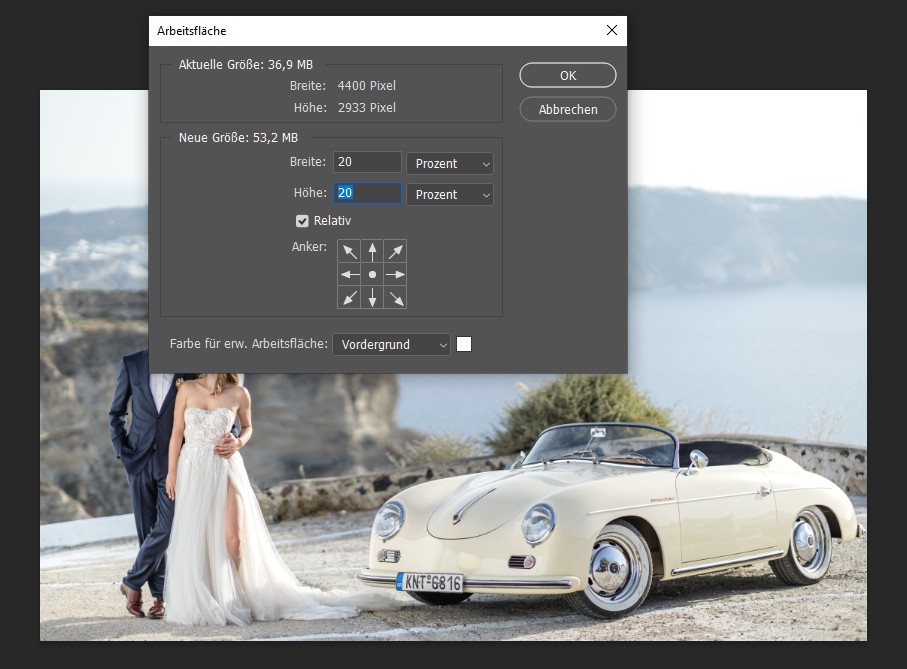

This is a good time to tell you I wasn’t super happy with my wedding photographer. There were plenty of pictures where the framing was too tight and something was missing, so we will use one of those as an example.

The car is slightly cut off on the right side and the framing is also too tight at the bottom, so we load this picture into Photoshop and extend the canvas by 20% in all directions:

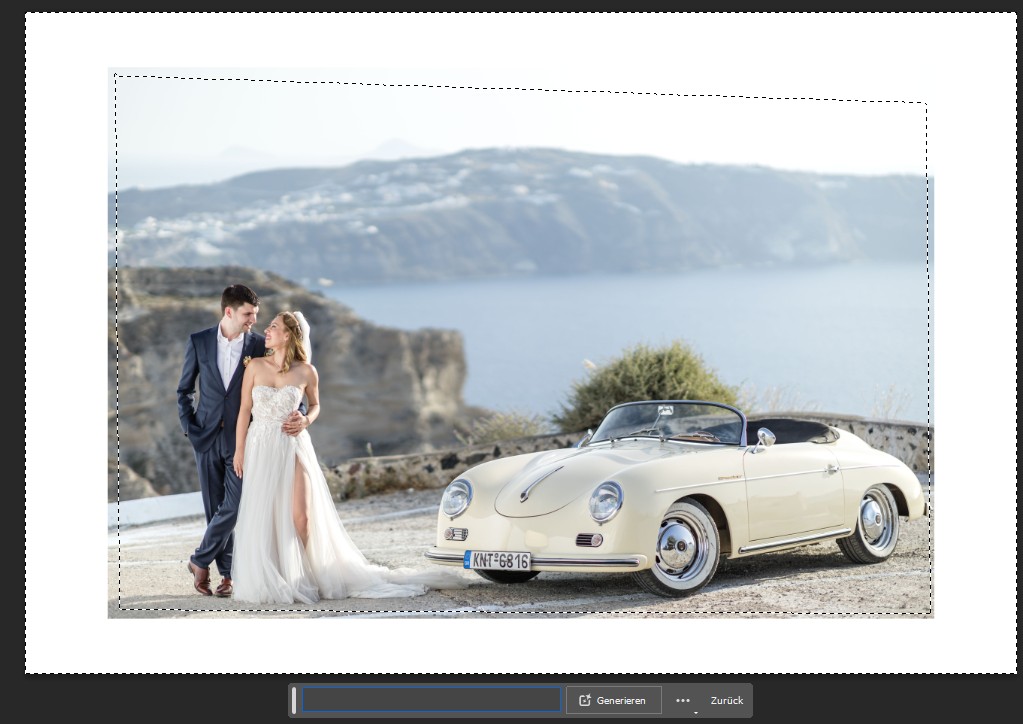
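To get a feeling for how much the AI has to invent here, the canvas arithmetic can be sketched in a few lines of Python. This is only a rough illustration and has nothing to do with how Photoshop works internally: the function name `extend_canvas` and the 6000×4000 pixel image size are made up for this example, and I am assuming that “20% in all directions” means 20% of the width is added on the left and on the right, and 20% of the height on the top and on the bottom.

```python
def extend_canvas(width, height, fraction=0.20):
    """Return the new canvas size, the box covered by the original
    picture, and the share of the new canvas that has to be generated.

    Assumption: `fraction` of the width is added on each of the left and
    right sides, and `fraction` of the height on each of top and bottom.
    """
    pad_x = round(width * fraction)   # pixels added left and right
    pad_y = round(height * fraction)  # pixels added top and bottom

    new_width = width + 2 * pad_x
    new_height = height + 2 * pad_y

    # (left, top, right, bottom) of the original picture inside the new canvas
    original_box = (pad_x, pad_y, pad_x + width, pad_y + height)

    # Everything outside the original box is empty and needs to be filled
    generated_share = 1 - (width * height) / (new_width * new_height)

    return (new_width, new_height), original_box, generated_share


# Hypothetical 24 MP picture, 6000 x 4000 pixels
new_size, original_box, share = extend_canvas(6000, 4000)
print(new_size)        # (8400, 5600)
print(original_box)    # (1200, 800, 7200, 4800)
print(f"{share:.0%}")  # 49%
```

Under these assumptions almost half of the final picture consists of generated pixels, which is worth keeping in mind when judging the results.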

We again mark the outer area:

When it comes to extending the canvas I had the best results by not giving any prompt, so I just clicked on generate and was presented with the following three options:

Let me first say, I think all three results are astonishing. I decided to go with the second one, as the wall on the right looked best to me and this is the final picture (or the picture as it should have been) after some minor adjustments:

For weddings, reportage, or whenever something has been cut on the edge, this feature can easily turn an otherwise unusable picture into an actually good one.

Now if you look at a 100% crop from this picture you can see the seam between the original and the generated part, but if that is the only complaint…

I used this feature with a lot of pictures from my archives to see how it works. I will share a few with you here, moving from easy to difficult, at least from my point of view. Maybe the AI sees things differently.

Examples

Now with that repeating background this might not look that astonishing at first sight, but the AI recognized the pattern of having a vertical pillar between 7 columns of windows, and that I find quite astonishing.

Things are starting to get more interesting here: a defocused complex background with a lot of perspective distortion. Here I first noticed that the AI has some issues with hands. Hands often look distorted and wrong, sometimes even with too many or too few fingers, so I went with this one where you only see the arm, but not the hand.

Now here I find the result spectacular. An unusual, complex out-of-focus background, and I cannot see anything that is wrong with the generated part.

Adding elements

So far we talked about removing unwanted parts and extending already existing parts, but elements can also be added. Personally, I don’t have much use for this application, but I can see people using this a lot for stock photos or advertising purposes.

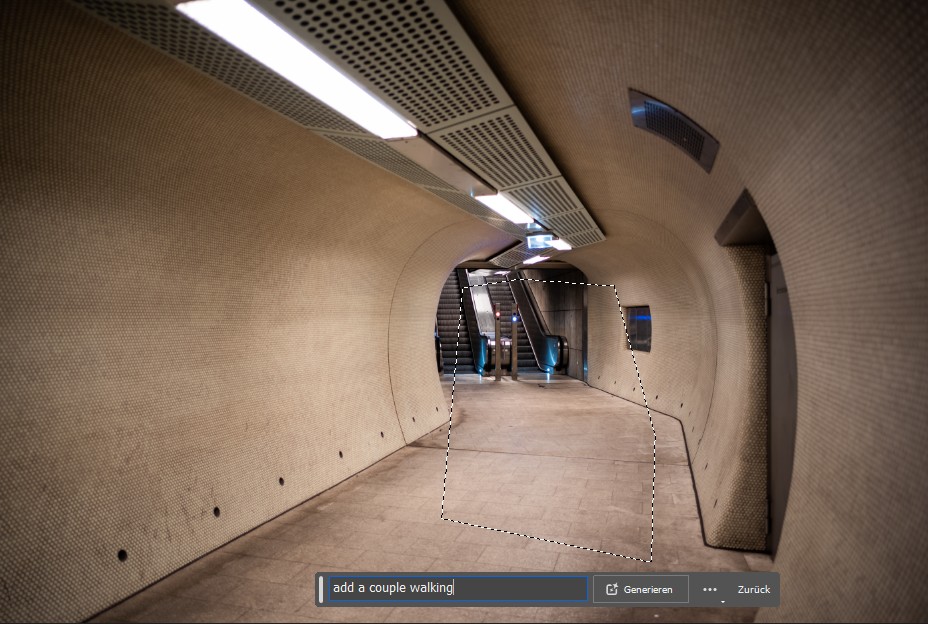

What if we want to see some people walking through this tunnel? I marked the area and told the AI to “add a couple walking”:

At first sight, one of the three options looks pretty convincing actually:

Now if you look more closely, you start to see some real issues here:

As said, with human proportions this AI is not doing a perfect job (yet).

Here, too, I went looking for a few possible candidates in my picture archives.

Examples

In this scene I always found the sky a bit messy, so I marked it and told the AI to “add dramatic sunset sky”. Pretty convincing result…

Talking about my wedding pictures again, I took this picture of my wife only, because the photographer didn’t understand the concept of a “silhouette”, which is why I was never in that picture.

I marked the area next to my wife and told the AI to “add silhouette of a groom next to the bride”. Neither my wife nor I think that guy looks like me, but if someone was looking for a stock photo like this, I think it would pass.

Conclusion

I am pretty sure I have only scratched the surface of what is possible with this new technology in Photoshop, but it has already changed my workflow forever: the generative fill has immediately become my go-to option for removing unwanted objects, and by extending the canvas I could save some pictures with imperfect framing that really bothered me.

Adding objects that were never in the scene to begin with not only raises the question of whether we are still talking about photography or rather digital art, it may also raise further moral/ethical questions. I will quote another article of mine here: every picture that has ever been taken or will ever be taken can be (re)created in Photoshop today.

AI just made Photoshop a lot easier to use and more accessible now.

To me this AI-based processing is the biggest leap in the history of Photoshop, if not in digital editing in general. And I am sure this journey has only just begun.

Thank you very much for yet another very excellent review. I don’t have any experience with the latest Photoshop but often use Luminar 4 and Luminar AI (tempted to try Luminar Neo). Just minimal use of the AI functions can greatly improve some images. Luminar calls the additive functions “creative” which can work wonders. However, I fully agree with you that there are moral/ethical issues in heavily manipulated images. I’m in Central Texas but have family and friends in the Stuttgart and Nuernberg areas so can at least relate a little to your beautiful locale. Thanks again and keep up the great work!

mfg,

W. H. Lemcke

Who owns the image rights… You as a photographer, -or??

AI would not work without pics already taken in the real world…. are all those pics out there free for AI to use or??

Who owns the image rights?

Just some questions that we all need to start to think about.

Well, Shutterstock did give me a few cents here and there because Adobe have included my images in their training data. That’s surely the case with most people with accounts there. They didn’t even pay the price of a regular download (talking about cents to begin with…), not that anyone was consulted (the power of ever changing TOS). They surely use everything available via Google and other search engines, so yeah, it is legal (not even sure about that) stealing, and almost everyone working with AI does it. Perhaps some day they will get sued, then settle for like 100 mil after earning billions, and the world goes on. I’m glad I’m mostly a hobbyist, because being a pro photographer is humiliating in so many ways these days. Gotta have thick skin. Like when Shutterstock charges 1+ eur for OUR photos and gives us 10 cents, because of covid no less (and it didn’t even affect their business negatively, rather the opposite, plus it’s 2023 already). AI just takes this to a new level, stealing stuff without pretending. Copyright makes less and less sense anyway, the way it works.

I would not call what the AI does when generating images stealing. I would call it painting. It is not producing a 1:1 copy of an existing image. It is painting a very detailed image of real world objects and putting them all together with some random textures and a gloss of staged lighting. It is like a very skilled Photoshop artist who knows how to paint clouds, cars, bridges or polar bears. It is not relying on a huge database of images as you might think. This is only needed during training. In production it only relies on its own “brain” with descriptions of how different objects look. Just like we all do too. You can close your eyes, think of a cat and see some random picture of a cat. Not one you have seen in the past, but an image your brain generated for you. And that is how AI is painting pictures too.

The ability is fascinating and all, but I really am discouraged by how quickly technology is undermining the value and reward of taking good pictures. Now you can, with increasing ease, confabulate anything you want into a picture at the expense of the art of faithfully depicting real and rare instances of beauty. Maybe it has its upsides in photography for reportage/marketing though ¯\_(ツ)_/¯

Like, why take a picture of landscape under rare lighting or astronomical conditions when you can just add it later?

Or as another example take the nature photographers, who could wait hours, sometimes days and on occasion weeks or months for a rare species to appear in a certain place, under dramatic weather and light conditions, to take a great and unique picture. Now you take a picture of the scene you want, at the hour you are there, in whatever light there is, then with AI you add the light, sky and weather conditions you want that may happen only once a year, then add the animal, voilà, you got it.

Martin: I agree, and extend it a bit and remove the take-a-picture-to-use-as-base part and just prompt for a selection of base images matching some parameters. Then the entire venture becomes a different discipline that doesn’t involve a camera or a sensor. As a prompter you can create breathtaking things from the comfort of your home. That doesn’t mean I will stop being outdoors in all weathers with a camera though; I like being outdoors in all weathers.

I kinda wish there was a way to show how prompted a photo was since photography doesn’t have to intersect with the prompt. Maybe it comes down to faith in the producer of images, in the end. Or perhaps photograph merely for your own amusement.

I miss a policy that all AI-generated content is visibly marked as such, e.g. by watermarks all over the frame: “I’m a lie generated by XYZ. This is based on training material, taken (stolen?) from 1000s of unwilling contributors, attributed by an unknown army of underpaid clickworkers.”

Very interesting piece! I’ve used the canvas extension option quite a bit myself. It’s very useful to make a composition look more balanced etc.

Upscaling photos or noise reduction are also a standard part of my workflow honestly. It has really changed the game, but I’m not sure if it’s really AI or a smart piece of software.

Very interesting… You can’t be a professional photographer today and ignore these tools to fabricate the perfect pictures for your market. It expands “photography” picture making into a wider practice of image craftsmanship and an intellectual process. It is also adding work to the process of development and post production.

In the end, it changes little – a good picture will still be a good picture, regardless of the technique employed.

As a hobby amateur it is not going to change my experimental fun in enjoying various lenses and capture scenarios. If anything it tells me to revisit my corpus for pictures that had flaws but could be resolved with this and turned into glorious large prints.

Hi Bastian,

This is likely just the infancy of what will probably have a profound influence on photography. I believe how we look at photography will change, for example in how it has a validity as evidence.

Ethical implications aside, do you expect the new software possibilities to affect the choice of lenses? For example, I have the FE85/1.8 but am considering changing to the 85/1.4 GM for the better bokeh. Do you think similar results can (easily) be achieved by software?

Not in the very near future, but I wouldn’t be surprised if in a few years you can buy profiles recreating the look of specific lenses like you can buy presets for colors and curves now.

Hmm, I’m not sure what to make of this yet. Getting rid of objects without traces and similar edits that were a lot of work before is surely useful. My approach to photography is mostly about conserving memories though, and when I’d start adding content that wasn’t there I’d feel like betraying myself, and the photo becomes kind of less meaningful to me.. it’s very exciting to have the option though.

Thank you for the well-written article.

When I started taking pictures at the age of 11, with a camera from Foto Porst (Fuji), and exposed on 35mm color film or black-and-white film, there were of course numerous pictures that were not processed further. Either the picture was cropped incorrectly, someone ran into my photo, I overlooked a small detail at the edge of the picture or the light did not fit after all.

Annoying, but this was part of photography.

Of course, I also edited my pictures, for example, by brightening shadows in the darkroom, combating dust or editing the image detail. If I wanted to change contrasts, appropriate filters were used for black and white photography.

If I was shooting on color stock, then I had to decide beforehand whether I wanted to use a more saturated or a natural-looking color film.

So I often had two cameras with me, or cameras with interchangeable magazines.

Of course, this is all much easier to do in digital photography.

But I also only use these equivalent steps or working methods in digital photography.

And with that, quite a lot of image improvement is already possible.

Perhaps I am already very old-fashioned at somewhat over 50 years, but this is and remains my kind of photography.

Of course, everyone is allowed to have his own opinion and to create and edit his pictures accordingly.

These are just my personal thoughts on the subject of photography.